What Is Search Engine Indexing?

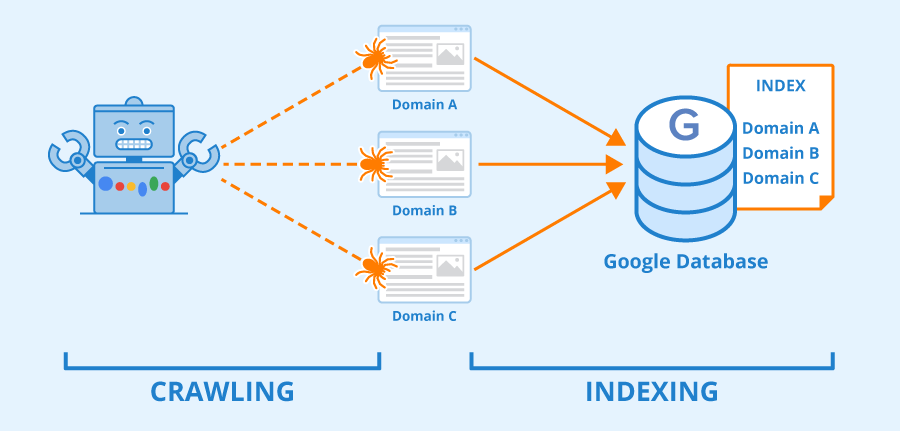

Search engine indexing refers to the process where a search engine (such as Google) organizes and stores online content in a central database (its index). The search engine can then analyze and understand the content, and serve it to readers in ranked lists on its Search Engine Results Pages (SERPs).

Before indexing a website, a search engine uses “crawlers” to investigate links and content. Then, the search engine takes the crawled content and organizes it in its database:

Image source: Seobility – License: CC BY-SA 4.0

We’ll look closer at how this process works in the next section. For now, it can help to think of indexing as an online filing system for website posts and pages, videos, images, and other content. When it comes to Google, this system is an enormous database known as the Google index.
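To make the filing-system analogy concrete, here is a minimal sketch of an inverted index, a common way to map words to the pages that contain them. It is an illustration only: real search engine indexes are far more sophisticated, and the function names and `example.com` URLs below are hypothetical.

```python
from collections import defaultdict
import re


def build_index(pages):
    """Map each lowercase word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in re.findall(r"[a-z0-9]+", text.lower()):
            index[word].add(url)
    return index


def search(index, query):
    """Return the URLs that contain every word in the query."""
    words = re.findall(r"[a-z0-9]+", query.lower())
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Hypothetical crawled content, keyed by URL.
pages = {
    "https://example.com/coffee": "How to brew coffee at home",
    "https://example.com/tea": "How to brew green tea",
}
index = build_index(pages)
print(search(index, "brew coffee"))
```

Looking up a query only touches the entries for the query's words, which is why storing content in an index is much faster than rescanning every page for each search.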

How Does a Search Engine Index a Site?

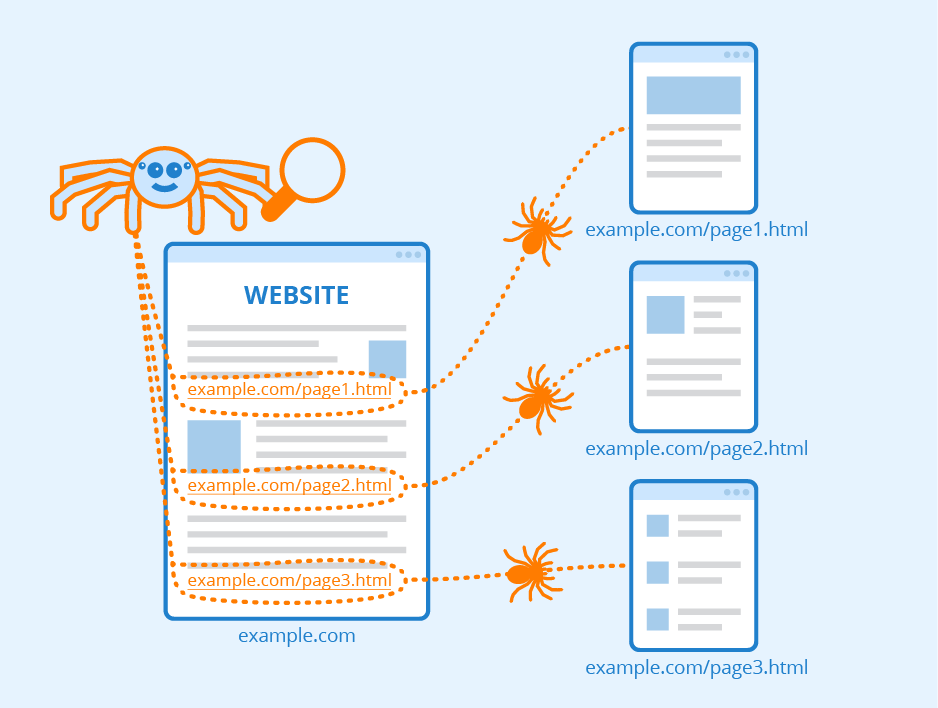

Search engines like Google use “crawlers” to explore online content and categorize it. These crawlers are software bots that follow links, scan webpages, and gather as much data about a website as possible. Then, they deliver the information to the search engine’s servers to be indexed:

Image source: Seobility – License: CC BY-SA 4.0

Every time content is published or updated, search engines crawl and index it to add its information to their databases. This process happens automatically, but you can speed it up by submitting sitemaps to search engines. These documents outline your website’s structure, including its links, to help search engines crawl and understand your content more effectively.

Search engine crawlers operate on a “crawl budget.” This budget limits how many pages the bots will crawl and index on your website within a set period. (They do come back, however.)

Crawlers compile information on essential data such as keywords, publish dates, images, and video files. Search engines also analyze the relationship between different pages and websites by following and indexing internal links and external URLs.
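The link-following behavior and the crawl budget described above can be sketched as a breadth-first crawl that stops after a fixed number of pages. This is a simplified illustration, not how any particular search engine works: the `SITE` dictionary stands in for a real website, and `LinkParser`, `crawl`, and `budget` are hypothetical names.

```python
from collections import deque
from html.parser import HTMLParser


class LinkParser(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(site, start, budget):
    """Breadth-first crawl of `site`, visiting at most `budget` pages."""
    seen = {start}
    queue = deque([start])
    crawled = []
    while queue and len(crawled) < budget:
        url = queue.popleft()
        crawled.append(url)
        parser = LinkParser()
        parser.feed(site.get(url, ""))
        for link in parser.links:
            # Only queue known pages that have not been discovered yet.
            if link in site and link not in seen:
                seen.add(link)
                queue.append(link)
    return crawled


# Hypothetical in-memory website: path -> HTML.
SITE = {
    "/": '<a href="/blog">Blog</a> <a href="/about">About</a>',
    "/blog": '<a href="/blog/post-1">Post 1</a> <a href="/">Home</a>',
    "/about": '<a href="/">Home</a>',
    "/blog/post-1": '<a href="/blog">Back</a>',
}

print(crawl(SITE, "/", budget=3))
```

With a budget of 3, the crawl stops before reaching `/blog/post-1`. A later visit with a fresh budget would pick it up, which mirrors the way crawlers return to a site over time.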

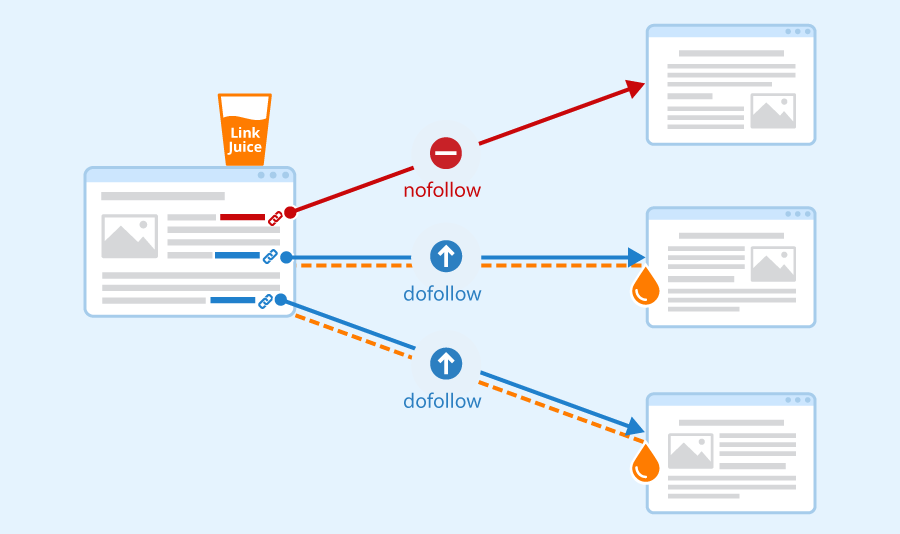

Note that search engine crawlers won’t necessarily follow all of the URLs on a website. By default, they crawl standard (“dofollow”) links, and they generally skip links marked with rel="nofollow" (Google treats this attribute as a hint rather than a strict rule). Therefore, you’ll want to focus on dofollow links in your link-building efforts, particularly backlinks, which are URLs on external sites that point to your content.

If external links come from high-quality sources, they’ll pass along their “link juice” when crawlers follow them from another site to yours. As such, these URLs can boost your rankings in the SERPs:

Image source: Seobility – License: CC BY-SA 4.0
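As a rough illustration of the dofollow and nofollow distinction discussed above, the following sketch separates the links in a snippet of HTML according to their `rel` attribute. The class and function names and the sample markup are hypothetical, and real crawlers apply more nuanced rules than this.

```python
from html.parser import HTMLParser


class RelParser(HTMLParser):
    """Sort <a> links into followed and nofollow lists."""

    def __init__(self):
        super().__init__()
        self.follow = []
        self.nofollow = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        # rel can hold several space-separated tokens, e.g. "noopener nofollow".
        rel_tokens = (attrs.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollow.append(href)
        else:
            self.follow.append(href)


def split_links(html):
    """Return (followed_links, nofollow_links) found in the HTML."""
    parser = RelParser()
    parser.feed(html)
    return parser.follow, parser.nofollow


# Hypothetical markup with one standard link and two nofollow links.
HTML = (
    '<a href="https://example.com/guide">Guide</a>'
    '<a rel="nofollow" href="https://example.com/ad">Ad</a>'
    '<a rel="noopener nofollow" href="https://example.com/forum">Forum</a>'
)

follow, nofollow = split_links(HTML)
print(follow, nofollow)
```

A check like this can help you audit which of your backlinks are likely to pass ranking value and which are marked nofollow.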

Furthermore, keep in mind that some content isn’t crawlable by search engines. If your pages are hidden behind login forms or passwords, or if your text is embedded in images, search engines won’t be able to access and index that content. (You can, however, use alt text to describe these images so that they can appear in searches on their own.)

1. Sitemaps

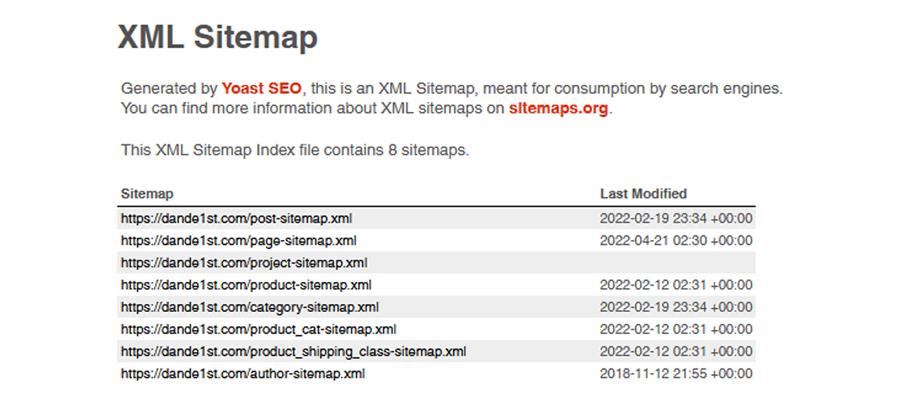

Keep in mind that there are two kinds of sitemaps: XML and HTML. It can be easy to confuse these two concepts since they’re both types of sitemaps that end in -ML, but they serve different purposes.

HTML sitemaps are user-friendly files that list all the content on your website. For example, you’ll typically find one of these sitemaps in a site’s footer. Scroll all the way down on Apple.com, and you will find an HTML sitemap.

This sitemap enables visitors to navigate your website easily. It acts as a general directory, and it can positively influence your SEO and provide a solid user experience (UX).

In contrast, an XML sitemap contains a list of all the essential pages on your website. You submit this document to search engines so they can crawl and index your content more effectively.
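To show what such a file contains, here is a minimal sketch that builds an XML sitemap with Python’s standard library. The `urlset`, `url`, `loc`, and `lastmod` elements and the namespace come from the sitemaps.org protocol. The `build_sitemap` function, the URLs, and the dates are hypothetical placeholders.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified) pairs.

    last_modified is an optional date string such as "2024-01-15".
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages to list in the sitemap.
entries = [
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/", None),
]
sitemap_xml = build_sitemap(entries)
print(sitemap_xml)
```

In practice, many content management systems and SEO plugins generate this file for you, so a script like this is mainly useful for understanding the format or for custom-built sites.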

2. Google Search Console

We use Google Search Console as an essential tool for submitting the sitemaps of websites developed by dande1st.com.

In the console, you can access an Index Coverage report, which tells you which pages have been indexed by Google and highlights any issues encountered during the process. Here you can analyze problem URLs and troubleshoot them to make them “indexable.”

Additionally, you can submit your XML sitemap to Google Search Console. This document acts as a “roadmap,” and helps Google index your content more effectively. On top of that, you can ask Google to recrawl certain URLs and parts of your site so that updated topics are always available to your audience without waiting on Google’s crawlers to make their way back to your site.

3. Alternative Search Engine Consoles

Although Google is the most popular search engine, it isn’t the only option. Limiting yourself to Google can close off your site to traffic from alternative sources such as Bing.

Keep in mind that each of these consoles offers unique tools for monitoring your site’s indexing and rankings in the SERPs. Therefore, we use them to expand your SEO strategy.